The current state of generative AI

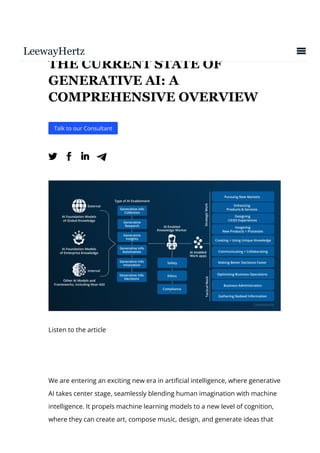

The current state of generative AI: A comprehensive overview

We are entering an exciting new era in artificial intelligence, one in which generative AI takes center stage, blending human imagination with machine intelligence. Generative AI takes machine learning models to a new level, enabling them to create art, compose music, produce designs, and generate ideas that leave us in awe. This is not science fiction; it is the reality we are experiencing today.

Over the past year, generative AI has evolved from an intriguing concept into a mainstream technology, commanding attention and attracting investment on a scale unprecedented in its brief history. It shows remarkable proficiency in producing coherent text, images, code, and other outputs from simple textual prompts. This capability has captivated the world, and curiosity grows with each new model release.

The potential of generative AI, however, goes well beyond traditional Natural Language Processing (NLP) tasks. The technology has found a home in many industries: it distills sophisticated algorithms into clear, concise explanations, helps us build bots and develop apps, and conveys complex academic concepts with unprecedented ease. Creative fields such as animation, gaming, art, cinema, and architecture are undergoing profound changes, spurred on by powerful text-to-image programs like DALL-E, Stable Diffusion, and Midjourney.

The groundwork for today's AI has been laid over more than a decade. However, 2022 marked a pivotal moment in the history of artificial intelligence: it was the year ChatGPT was launched, ushering in a promising era of human-machine cooperation. This sudden acceleration prompts us to look more closely at the reasons behind it and, more importantly, at the path that lies ahead. In this article, we explore the origins, trajectory, and leading players of the present-day generative AI landscape.
We'll also explore the tools that are placing the creative, ideation, development, and production powers of this transformative technology into users' hands. With industry analysts forecasting a $110 billion valuation by 2030, there's no denying that the future of AI is not just generative; it's transformative. So join us as we trace the story of the greatest technological evolution of our time.

Table of contents

- Understanding generative AI
  - Generative Adversarial Networks (GANs)
  - Transformer-based models
- The evolution of generative AI and its current state
  - Historical context of generative AI development
  - Major achievements and milestones of generative AI
- Where do we currently stand in generative AI research and development?
  - The state of Large Language Models (LLMs)
  - OpenAI models
  - Google's GenAI foundation models
  - DeepMind's Chinchilla model
  - Meta's LLaMA models
  - The Megatron-Turing model by Microsoft & Nvidia
  - GPT-Neo models by EleutherAI
  - Hardware and cloud platforms transformation
- How is generative AI explored in other modalities?
- How is generative AI driving value across major industries?
  - Customer operations
  - Marketing and sales
  - Software engineering
  - Research and development
  - Retail and CPG
  - Banking
  - Pharmaceutical and medical
- The ethical and social considerations and challenges of generative AI
- Current trends of generative AI

Understanding generative AI

Generative AI is a branch of artificial intelligence focused on creating models and systems that can generate new, original content. These models are trained on large datasets and can produce outputs such as text, images, music, and even video. Underpinned by unsupervised and semi-supervised machine learning algorithms, the technology enables computers to create original content that is nearly indistinguishable from human-created output. To appreciate it fully, it is vital to understand the models that drive it. Here are some important generative AI models.

Generative Adversarial Networks (GANs)

[Figure: GAN architecture, with labels "Generator", "Random input", and "Real examples"]

At the core of generative AI are two main types of models, each with its own characteristics and applications. The first, Generative Adversarial Networks (GANs), excel at generating visual and multimedia content from both text and image data. Invented by Ian Goodfellow and his colleagues in 2014, GANs pit two neural networks, the generator and the discriminator, against each other in a zero-sum game. The generator's task is to create convincing "fake" content from a random input vector, while the discriminator's role is to distinguish real samples from the domain from the fakes produced by the generator. The two networks, typically implemented as Convolutional Neural Networks (CNNs), continuously challenge and learn from each other: whenever the generator produces samples that fool the discriminator, the discriminator is updated to catch them, which in turn pushes the generator toward ever more realistic output.

Transformer-based models

These deep learning networks are predominantly used in natural language processing. Pioneered by Google in 2017, they excel at understanding context within sequential data. One of the best-known examples is GPT-3, built by OpenAI, which produces human-like text, from poetry to emails, with uncanny authenticity.

The original transformer architecture operates in two stages: encoding and decoding. The encoder extracts features from the input sequence, transforming them into vectors that represent the input's semantic and positional aspects. These vectors are passed to the decoder, which derives context from them to generate the output sequence. Using this sequence-to-sequence approach, transformers learn to predict the next item in a sequence, with context giving meaning to each item.

Key to the success of transformer models are attention, or self-attention, mechanisms. These add context by capturing how different elements within a sequence interact with and influence each other. In addition, transformers process all positions of a sequence in parallel rather than one step at a time, which significantly accelerates training.

Partner with LeewayHertz for robust generative AI solutions. Our deep domain knowledge and technical expertise allow us to develop efficient and effective generative AI solutions tailored to your unique needs.

The evolution of generative AI and its current state

Historical context of generative AI development

The journey of generative AI began in the 1960s with the pioneering work of Joseph Weizenbaum, who developed ELIZA, the first chatbot. This early attempt at Natural Language Processing (NLP) simulated human conversation by generating responses based on the text it received. Although ELIZA was merely a rules-based system, it began a technological evolution in NLP that would unfold over the coming decades.
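The adversarial game described in the GAN section above is usually expressed as a pair of loss functions. The following is a minimal, framework-free Python sketch under stated assumptions: the discriminator is assumed to output a probability that a sample is real, and the generator uses the "non-saturating" loss suggested in the original GAN paper. In an actual GAN, these losses would be minimized alternately by gradient descent on the two networks' weights, which is not shown here.

```python
import math

EPS = 1e-12  # guards against log(0)


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator.

    d_real: discriminator probabilities for real samples (ideally near 1)
    d_fake: discriminator probabilities for generated samples (ideally near 0)
    """
    real_term = sum(math.log(p + EPS) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p + EPS) for p in d_fake) / len(d_fake)
    return -(real_term + fake_term)


def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator is fooled."""
    return -sum(math.log(p + EPS) for p in d_fake) / len(d_fake)


# A discriminator that easily spots the fakes: its loss is low,
# while the generator's loss is high.
print(discriminator_loss([0.9, 0.95], [0.1, 0.05]))
print(generator_loss([0.1, 0.05]))

# At the theoretical equilibrium the discriminator outputs 0.5 everywhere,
# and its loss equals 2 * ln(2), i.e. ln(4).
print(discriminator_loss([0.5], [0.5]))
```

The two losses pull in opposite directions: anything that lowers the generator's loss (raising the discriminator's scores on fakes) raises the discriminator's loss, which is the zero-sum game the text describes.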

The foundation of contemporary generative AI lies in deep learning, a concept dating back to the 1950s. Despite its early inception, the field stalled until the 1980s and 1990s, when it was revived by artificial neural networks trained with backpropagation. The new millennium brought a significant leap in data availability and computing power, turning deep learning from theory into practice.

The real turning point arrived in 2012, when Geoffrey Hinton and his team demonstrated a breakthrough in speech recognition using deep neural networks. This success was replicated in image classification in 2014, propelling substantial advances in AI research. That same year, Ian Goodfellow published his groundbreaking paper on Generative Adversarial Networks (GANs). His approach pitted two networks against each other in a zero-sum game, generating new images that resembled the training images yet were distinct from them. This milestone led to further refinements in GAN architecture and progressively better image synthesis, and the methods were eventually applied elsewhere, including to music composition.

The years that followed saw the emergence of new model architectures: Recurrent Neural Networks (RNNs) for text and video generation, Long Short-Term Memory (LSTM) networks for text generation, transformers for text generation, Variational Autoencoders (VAEs) and diffusion models for image generation, and various flow-based architectures for audio, image, and video. Parallel advances gave rise to Neural Radiance Fields (NeRF), which build 3D scenes from 2D images, and to reinforcement learning, which trains agents through reward-based trial and error.

More recent achievements in generative AI have been astonishing, from photorealistic images and convincing deepfake videos to believable audio synthesis and human-like text produced by sophisticated language models like OpenAI's GPT series. However, it was only in the second half of 2022, with the launch of diffusion-based image services like Midjourney, DALL-E 2, and Stable Diffusion and the release of OpenAI's ChatGPT, that generative AI truly caught the attention of the media and the mainstream. New services that convert text into video (Make-A-Video, Imagen Video) and 3D representations (DreamFusion, Magic3D, and GET3D) further highlight the power and potential of generative AI to the wider world.

Major achievements and milestones

Generative AI has advanced remarkably in recent years thanks to the emergence of powerful, versatile models. These advances are not isolated; they result from larger models, growing datasets, and greater computing power interacting to drive progress.

The modern AI era dates back to 2012, with significant progress in deep learning and Convolutional Neural Networks (CNNs). CNNs, although conceived in the 1990s, became practical only once computational capabilities increased. A key enabler was ImageNet, an annotated image dataset for training computer vision algorithms, presented by Stanford AI researchers in 2009. When AlexNet combined CNNs with ImageNet data in 2012, it beat its closest competitor's error rate by nearly 11 percentage points, a significant step forward in computer vision.

In 2017, Google's Transformer model bridged a critical gap in Natural Language Processing (NLP), an area where deep learning had previously struggled. The model introduced a mechanism called "attention," which lets it look at the entire input sequence and determine how relevant each part is to each output component.
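The attention mechanism can be illustrated with scaled dot-product attention, the form used in the 2017 Transformer paper: each query is compared with every key, the scores are divided by the square root of the key dimension and passed through a softmax, and the resulting weights average the value vectors. This pure-Python sketch is for illustration only; it assumes the queries, keys, and values are given directly, whereas a real transformer computes them with learned linear projections and runs several attention heads in parallel.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """queries, keys, values: lists of equal-length float vectors.

    Returns (outputs, weights): one output vector and one weight row per query.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        # Weighted average of the value vectors.
        out = [sum(wi * v[j] for wi, v in zip(w, values)) for j in range(len(values[0]))]
        outputs.append(out)
        weights.append(w)
    return outputs, weights


# Self-attention over three toy 2-dimensional "token" vectors:
# the same vectors serve as queries, keys, and values.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(x, x, x)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row sums to 1. The first token attends equally to itself and to the third token, because both keys have the same dot product with its query, and less to the second token, whose key is orthogonal to it.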
This breakthrough transformed how AI approached translation problems and opened up new possibilities for many other NLP tasks. More recently, the approach has also been extended to computer vision.

Advances in transformers led to models like BERT and the original GPT in 2018, which made it possible to train on unstructured text using self-supervised objectives such as next-word prediction. These models showed surprising "zero-shot" performance on new tasks they had not been specifically trained for. OpenAI continued to push the boundaries by probing how far such models could scale with more parameters and training data.

The major challenge researchers faced was sourcing appropriate training data. Although vast amounts of text were available online, assembling a large and relevant dataset was arduous. BERT and the first GPT began to leverage this unstructured data, aided by the computational power of GPUs. OpenAI took this further with GPT-2 and GPT-3. These "generative pre-trained transformers" were pre-trained on extensive text data and designed to generate new words in response to input.

Another milestone for transformer models was fine-tuning: adapting large pre-trained models to specific tasks or smaller datasets, improving performance in a particular domain at a fraction of the computational cost of training from scratch. For example, adapting GPT-3 to medical documents could yield a better understanding of medical texts and more accurate extraction of relevant information from them.

In 2022, instruction tuning emerged as a significant advance in generative AI. Instruction tuning teaches a model initially trained for next-word prediction to follow human instructions and preferences, making it easier to interact with these Large Language Models (LLMs). A further benefit was aligning the models with human values, helping prevent the generation of undesired or potentially dangerous content.
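Next-word prediction, the pre-training objective mentioned above, can be illustrated with a deliberately tiny model. The sketch below is a bigram model, a simplification chosen for illustration: it counts which word follows which in a made-up toy corpus and generates text by repeatedly picking the most frequent follower. Models such as GPT instead use a transformer that conditions on the entire preceding context and operates on subword tokens rather than whole words.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Most frequent word seen after `word`, or None if `word` is unknown."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, start, max_words=5):
    """Greedy generation: keep appending the predicted next word."""
    words = [start]
    for _ in range(max_words):
        nxt = predict_next(counts, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


corpus = ["the cat sat on the mat", "the cat ate the fish", "a cat sat down"]
model = train_bigram(corpus)
print(generate(model, "the", max_words=2))  # the cat sat
```

Pre-training an LLM follows the same idea at vastly larger scale: the model is rewarded for assigning high probability to the word that actually comes next, and generation repeatedly feeds its own prediction back in as input.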
OpenAI implemented a specific technique for instruction tuning known as Reinforcement Learning from Human Feedback (RLHF), in which human rankings of model responses are used to train a reward model that then guides the language model's training. Building further on instruction tuning, OpenAI introduced ChatGPT, which recast instruction tuning in a dialogue format and provided an accessible interface for interaction. This paved the way for widespread awareness and adoption of generative AI products, shaping the landscape as we know it today.

Where do we currently stand in generative AI research and development?

The state of Large Language Models (LLMs)

Large Language Model (LLM) research and development is currently in a lively, fast-evolving stage. The landscape includes providers of LLM APIs such as OpenAI, Cohere, and Anthropic, while on the consumer end, products like ChatGPT and Bing offer easy access to these advanced models.

The pace of innovation in this field is astonishing, with improvements and new concepts introduced regularly. Examples include multimodal models that can process and understand both text and images, and agent models that can interact with each other and with different tools. This rapid pace raises several important questions:

- What will be the most common ways for people to interact with LLMs in the future?
- Which organizations will emerge as the key players in the advancement of LLMs?
- How fast will the capabilities of LLMs continue to grow?
- Given the balance between the risk of uncontrolled outputs and the benefits of democratized access to this technology, what is the future of open-source LLMs?
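The RLHF step mentioned earlier in connection with ChatGPT typically begins by training a reward model on human preference data: labelers compare two responses to the same prompt, and the reward model learns to score the preferred one higher. A common choice, used for example in OpenAI's InstructGPT work, is the pairwise loss -log(sigmoid(r_chosen - r_rejected)). The sketch below computes only that loss from given reward scores; the reward model itself, and the later reinforcement-learning stage that optimizes the language model against it, are outside its scope. The example scores are hypothetical.

```python
import math


def pairwise_reward_loss(reward_chosen, reward_rejected):
    """-log(sigmoid(reward_chosen - reward_rejected)), computed stably.

    Small when the reward model scores the human-preferred response higher,
    large when it prefers the rejected one.
    """
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    # For negative margins, rewrite the expression to avoid overflow in exp(-margin).
    return -margin + math.log1p(math.exp(margin))


def batch_reward_loss(pairs):
    """Average loss over (chosen_score, rejected_score) pairs."""
    return sum(pairwise_reward_loss(c, r) for c, r in pairs) / len(pairs)


# Hypothetical reward scores for three preference pairs.
pairs = [(2.0, -1.0), (0.5, 0.4), (-0.3, 1.2)]
print(batch_reward_loss(pairs))
```

The third pair, where the model scores the rejected response higher, contributes most of the loss; minimizing it pushes the reward model toward the human ranking.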

Here is a table of leading LLMs:

| Company | Model | Release date |
|---|---|---|
| Meta | LLaMA | February 2023 |
| EleutherAI | GPT-NeoX | February 2022 |
| Meta | Galactica | November 2022 |
| Cohere | Cohere XLarge | February 2022 |
| Anthropic | AnthropicLM v4s3 | April 2022 |
| Google | LaMDA | May 2021 |
| Google | GLaM (Mixture of Experts) | December 2021 |
| Google DeepMind | Gopher | December 2021 |
| Meta | OPT | May 2022 |
| OpenAI | GPT-3 | June 2020 |
| AI21 Labs | Jurassic-1 | August 2021 |
| BigScience | BLOOM | August 2022 |
| Baidu | ERNIE 3.0 Titan | December 2021 |
| Google | PaLM | April 2022 |
| OpenAI | GPT-4 | March 2023 |
| Google DeepMind | Chinchilla | March 2022 |
| MosaicML | MosaicML GPT | September 2022 |
| Nvidia & Microsoft | MT-NLG | October 2021 |

OpenAI's models

| Model | Function |
|---|---|
| GPT-4 | Most capable GPT model, able to do complex tasks and optimized for chat |
| GPT-3.5 Turbo | Optimized for dialogue and chat; most capable GPT-3.5 model |
| Ada | Capable of simple tasks like classification |
| Davinci | Most capable GPT-3 model |
| Babbage | Fast, lower cost, and capable of straightforward tasks |
| Curie | Faster and lower cost than Davinci |
| DALL-E | Image model |
| Whisper | Audio model |

OpenAI, the organization behind the transformative Generative Pre-trained Transformer (GPT) models, is dedicated to developing and deploying advanced AI technologies. Founded as a nonprofit in San Francisco in 2015, OpenAI set out to create Artificial General Intelligence (AGI): AI systems as intellectually capable as human beings. The organization conducts state-of-the-art research across a variety of AI domains, including deep learning, natural language processing, computer vision, and robotics, aiming to address real-world problems through its technologies.

In 2019, OpenAI made a strategic shift and became a capped-profit company: as outlined by CEO Sam Altman, investors' returns are limited to a fixed multiple of their original investment. According to the Wall Street Journal, OpenAI's initial funding consisted of $130 million in charitable donations, with Tesla CEO Elon Musk contributing a significant portion. Since then, OpenAI has raised approximately $13 billion in a fundraising effort led by Microsoft. The partnership with Microsoft led to an enhanced version of Bing and a more interactive suite of Microsoft Office apps through the integration of OpenAI's ChatGPT.

In 2019, OpenAI unveiled GPT-2, a language model capable of generating remarkably realistic and coherent text passages. It was superseded in 2020 by GPT-3, a model with 175 billion parameters. This versatile language tool lets users interact with it without knowing a programming language or complex software tools. Continuing this trajectory, OpenAI launched ChatGPT in November 2022; building on earlier versions, it generates text that more closely mirrors human conversation.

In March 2023, OpenAI introduced GPT-4, a multimodal model that accepts both image and text inputs and generates text. GPT-4 supports a context of up to 32,768 tokens in its extended variant, far more than its predecessor, which lets it handle around 25,000 words. According to OpenAI, GPT-4 is 40% more likely to produce factual responses than GPT-3.5 and 82% less likely to respond to requests for disallowed content.
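The relationship between a token limit and a word count is a rule of thumb rather than an exact conversion. OpenAI's documentation suggests that, for English text, one token corresponds to roughly three-quarters of a word; the sketch below simply applies that assumed ratio, and also reflects that the prompt and the generated reply share the same context window. Real counts depend on the tokenizer and the text.

```python
# Assumed rule of thumb for English text: ~0.75 words per token.
WORDS_PER_TOKEN = 0.75


def approx_words(tokens, words_per_token=WORDS_PER_TOKEN):
    """Rough number of English words that fit in a given number of tokens."""
    return int(tokens * words_per_token)


def max_completion_tokens(context_window, prompt_tokens):
    """Tokens left for the reply once the prompt has used part of the window."""
    return max(0, context_window - prompt_tokens)


print(approx_words(32_768))                   # 24576
print(max_completion_tokens(32_768, 30_000))  # 2768
```

An estimate of about 24,600 words is consistent with the "around 25,000 words" figure quoted above; a long prompt leaves correspondingly less room for the answer.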
Google’s GenAI foundation models

Google AI, Google's scientific research division, has been at the forefront of advances in machine learning. Its most significant recent contribution is the Pathways Language Model (PaLM), Google's largest publicly disclosed model to date and a major component of Google's recently launched chatbot, Bard. PaLM forms the foundation of numerous Google initiatives, including the instruction-tuned Flan-PaLM and the multimodal PaLM-E, which Google describes as its first "embodied" multimodal language model, combining text and visual inputs.

PaLM was trained with a self-supervised approach on a broad text corpus: multilingual web pages (27%), English books (13%), open-source code from GitHub repositories (5%), multilingual Wikipedia articles (4%), English news articles (1%), and social media conversations (50%). This expansive dataset contributed to PaLM's strong results: in few-shot settings, it surpassed previous models such as GPT-3 and Chinchilla on 28 out of 29 NLP tasks.

The largest PaLM variant has 540 billion parameters, significantly more than GPT-3's 175 billion, and was trained on 780 billion tokens, compared with GPT-3's 300 billion. Training consumed approximately 8x more computation than GPT-3, though likely considerably less than what was required to train GPT-4. PaLM is a dense, decoder-only Transformer, and it was trained across two TPU v4 pods, each with 3,072 TPU v4 chips connected to 768 hosts (6,144 chips in total). This configuration enabled large-scale training without pipeline parallelism, and Google's proprietary Pathways system scaled the model seamlessly across the TPUs, demonstrating the capacity to train ultra-large models like PaLM.

Google's latest addition is PaLM 2, introduced at the I/O 2023 developer conference. Billed by Google as a next-generation language model, PaLM 2 comes with enhanced capabilities and powers more than 25 new products, demonstrating the reach of multifaceted AI models.

Broadly speaking, Google's GenAI suite comprises four foundation models, each specializing in a different aspect of generative AI:

1. PaLM 2: A comprehensive language model trained on more than 100 languages. Its capabilities include text processing, sentiment analysis, and classification, among other tasks. It is designed to understand, generate, and translate nuanced text across multiple languages, including idioms, poems, and riddles, and it can also handle logical reasoning and complex mathematics.
2. Codey: A foundation model built to boost developer productivity. It can be incorporated into a software development kit (SDK) or an application to streamline code generation and code completion. To improve its performance, Codey has been fine-tuned on high-quality, openly licensed code from a variety of external sources.
3. Imagen: A text-to-image foundation model that lets organizations generate and customize studio-quality images. Developers can use it to create or edit images, opening up a wide range of creative possibilities.
4. Chirp: A foundation model trained to convert speech to text.
It supports many languages and can be used to generate accurate captions or build voice assistants, improving accessibility and user interaction.

Together, these models form the pillars of Google's GenAI stack, demonstrating the breadth and depth of Google's AI capabilities.

DeepMind's Chinchilla model

DeepMind Technologies, a UK-based artificial intelligence research lab founded in 2010, was acquired by Google in 2014 and came under Alphabet Inc. in 2015. One notable DeepMind achievement is the Neural Turing Machine, a neural network that aims to emulate the short-term memory of the human brain.

DeepMind has an impressive track record. Its AlphaGo program made history in 2016 by defeating a professional human Go player, while AlphaZero, using reinforcement learning, beat the strongest existing programs at Go and shogi. In 2020, DeepMind's AlphaFold made significant strides on the protein folding problem, and by July 2022 it had predicted the structures of over 200 million proteins. In April 2022, the company launched Flamingo, a unified visual language model capable of describing images, and in July 2022 it announced DeepNash, a model-free multi-agent reinforcement learning system.

Among DeepMind's AI innovations is the Chinchilla language model, introduced in March 2022, whose claim to fame is outperforming GPT-3. A key finding of the Chinchilla paper was that prior LLMs had been trained on insufficient data: an optimal model of a given size should use far more training data than GPT-3 did. Although gathering more training data increases time and cost, it yields more efficient models with fewer parameters, which greatly reduces inference costs, since the cost of running a finished model scales with its parameter count.
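The trade-off described above can be made concrete with two widely used approximations, both treated here as assumptions rather than exact accounting: training compute is roughly 6 × N × D floating-point operations for a model with N parameters trained on D tokens, and the Chinchilla results are often summarized as a rule of thumb of about 20 training tokens per parameter. Applied to the parameter and token counts quoted in this article, the sketch also reproduces the roughly 8x compute gap between PaLM and GPT-3.

```python
def training_flops(params, tokens):
    """Approximate training compute: C ~= 6 * N * D FLOPs (rule of thumb)."""
    return 6 * params * tokens


def compute_optimal_tokens(params, tokens_per_param=20):
    """Chinchilla-style heuristic: ~20 training tokens per parameter."""
    return params * tokens_per_param


# (parameters, training tokens) as quoted in the text
models = {
    "GPT-3": (175e9, 300e9),
    "Chinchilla": (70e9, 1.4e12),
    "PaLM": (540e9, 780e9),
}

for name, (n, d) in models.items():
    print(f"{name}: ~{training_flops(n, d):.2e} FLOPs, "
          f"{d / n:.1f} tokens per parameter, "
          f"heuristic optimum ~{compute_optimal_tokens(n):.1e} tokens")

ratio = training_flops(*models["PaLM"]) / training_flops(*models["GPT-3"])
print(f"PaLM / GPT-3 compute: ~{ratio:.1f}x")  # ~8.0x
```

GPT-3 (about 1.7 tokens per parameter) and PaLM (about 1.4) sit far below the ~20 tokens-per-parameter heuristic, which is the under-training the Chinchilla paper pointed out; Chinchilla itself lands right on it.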

With 70 billion parameters, 60% fewer than GPT-3, Chinchilla was trained on 1.4 trillion tokens, about 4.7 times more than GPT-3. Chinchilla achieved an average accuracy of 67.5% on the Massive Multitask Language Understanding (MMLU) benchmark and outperformed major LLMs such as Gopher (280B), GPT-3 (175B), Jurassic-1 (178B), and Megatron-Turing NLG (530B) across a wide array of downstream evaluation tasks.

Meta's LLaMA models

Meta AI, formerly known as Facebook Artificial Intelligence Research (FAIR), is an AI lab known for its contributions to the open-source community, including frameworks, tools, libraries, and models that support research and large-scale production deployment. A significant milestone was the 2018 release of PyText, an open-source modeling framework for Natural Language Processing (NLP) systems. Meta introduced BlenderBot 3, a chatbot designed to improve conversational ability and safety, in August 2022, and Galactica, a large language model launched in November 2022, to help scientists summarize academic papers and annotate molecules and proteins.

Released in February 2023, LLaMA (Large Language Model Meta AI) is Meta's entry into transformer-based large language models, developed to support researchers, scientists, and engineers in exploring AI applications. To mitigate potential misuse, LLaMA is distributed under a non-commercial license, with access granted case by case to academic researchers; individuals and organizations affiliated with government, civil society, and academia; and industry research labs. By sharing the code and weights, Meta allows other researchers to explore and test new approaches to LLMs.

The LLaMA models range from 7 billion to 65 billion parameters, placing LLaMA-65B in the same league as DeepMind's Chinchilla and Google's PaLM. The models were trained only on publicly available unlabeled data, and smaller foundation models like these require far less computing power to run and study. The larger variants, LLaMA-65B and LLaMA-33B, were trained on 1.4 trillion tokens covering 20 languages; according to the FAIR team, performance varies across languages. Training data sources included CCNet (67%), GitHub, Wikipedia, arXiv, Stack Exchange, and books. However, like other large language models, LLaMA has known issues, including biased and toxic generation and hallucination.

The Megatron-Turing model by Microsoft & Nvidia

Nvidia, a pioneer in the AI industry, is known for its Graphics Processing Units (GPUs) and Application Programming Interfaces (APIs) for applications including data science, high-performance computing, mobile computing, and automotive systems. As a leading producer of AI hardware and software, Nvidia plays an integral role in shaping the AI landscape.

In 2021, Nvidia's Applied Deep Learning Research team, together with Microsoft, introduced the Megatron-Turing NLG (MT-NLG) model. With 530 billion parameters and trained on 270 billion tokens, the model demonstrates the company's pursuit of innovation in AI. To promote practical use, Nvidia offers an Early Access program for MT-NLG through its managed API service, enabling researchers and developers to tap into the model. Nvidia has also launched the DGX Cloud platform, which gives users access to a range of Nvidia's Large Language Models (LLMs) and generative AI models.

GPT-Neo models by EleutherAI

EleutherAI, founded in July 2020 by Connor Leahy, Sid Black, and Leo Gao, is a non-profit AI research lab. It is recognized for large-scale Natural Language Processing (NLP) research, with particular emphasis on understanding and aligning massive models. EleutherAI aims to democratize the study of foundation models, fostering an open-science culture within NLP and raising awareness of these models' capabilities, limitations, and potential hazards.

The organization has undertaken several notable projects. In December 2020, it released the Pile, an 800 GiB dataset for training Large Language Models (LLMs). It then unveiled the GPT-Neo models in March 2021 and, in June of the same year, GPT-J-6B, a 6-billion-parameter language model that was the largest open-source model of its kind at the time. EleutherAI researchers also combined CLIP and VQGAN into a freely accessible image generation technique. Collaborating with the Korean NLP company TUNiB, EleutherAI has trained language models in several languages, including Polyglot-Ko. The organization initially relied on Google's TPU Research Cloud program for compute, but by 2021 it had turned to CoreWeave for computing resources.
It also uses the TensorFlow Research Cloud for more cost-effective computational resources. February 2022 saw the release of GPT-NeoX-20B, then the largest open-source language model. In January 2023, EleutherAI formalized its status as a non-profit research institute.

GPT-NeoX-20B, EleutherAI's flagship NLP model, has 20 billion parameters and was developed using the organization's GPT-NeoX framework on CoreWeave GPUs. It achieved 72% accuracy on the LAMBADA sentence completion task and an average zero-shot accuracy of 28.98% on the STEM subjects of the Hendrycks test. Its training data, the Pile, comprises data from 22 distinct sources spanning five categories: academic writing, web resources, prose, dialogue, and miscellaneous sources.

Publicly available and pre-trained, GPT-NeoX-20B is an autoregressive transformer decoder language model that stands out as a capable few-shot reasoner. It has 44 layers, a hidden dimension of 6,144, and 64 attention heads, and it uses rotary positional embeddings rather than the learned positional embeddings commonly found in GPT models.

Hardware and cloud platforms transformation

Generative AI has considerably influenced the evolution of hardware and the cloud landscape. Recognizing the processing power needed to train and run complex AI models, companies like Nvidia have developed powerful GPUs such as the ninth-generation H100 Tensor Core GPU. With 80 billion transistors, it is designed to accelerate large-scale AI and High-Performance Computing (HPC) workloads, following the success of its predecessor, the A100, in deep learning.

Meanwhile, Google's Tensor Processing Units (TPUs), custom-designed application-specific integrated circuits (ASICs) built for efficient machine learning, have played a critical role in this transformation. TPUs are closely integrated with TensorFlow, Google's machine learning framework. Google Cloud Platform has further embraced generative AI by launching TPU v4 on Cloud, purpose-built for accelerating NLP workloads, while developing TPU v5 for internal applications.

Microsoft Azure has responded to the demand for generative AI by providing GPU instances powered by Nvidia GPUs such as the A100 and P40, tailored for machine learning and deep learning workloads. Microsoft's partnership with OpenAI enabled the training of advanced generative models like GPT-3 and GPT-4 and made them accessible to developers through Azure's cloud infrastructure.

Amazon Web Services (AWS), for its part, offers GPU-equipped instances such as Amazon Elastic Compute Cloud (EC2) P3 instances, which use Nvidia V100 GPUs with 5,120 CUDA cores each; the largest P3 instances combine eight of these GPUs with up to 256 GB of GPU memory. AWS has also designed its own chips, Inferentia for inference and Trainium for training, to meet the computational demands of generative AI.

This transformation in hardware and cloud infrastructure has facilitated the creation of advanced models like BERT, RoBERTa, BLOOM, Megatron, and the GPT series. BERT and RoBERTa, both transformer-based, have delivered impressive results across numerous NLP tasks, while BLOOM, an openly accessible multilingual language model, was trained on 384 A100 80GB GPUs.

How is generative AI explored in other modalities?

Image generation: State-of-the-art tools for image generation and manipulation have emerged from the combination of powerful models, vast datasets, and robust computing capabilities. OpenAI's DALL-E, an AI system that generates images from textual descriptions, exemplifies this. Using a modified version of the GPT-3 model, DALL-E can generate unique, high-resolution images and manipulate existing ones. Despite challenges such as algorithmic biases stemming from its training on public datasets, it is a notable player in the space. Midjourney, an AI program by an

- 22. independent research lab, allows users to generate images through Discord bot commands, enhancing user interactivity. The Stable Di몭usion model by Stability AI is another key player, with its capabilities for image manipulation and translation from the text. This model has been made accessible through an online interface, DreamStudio, which o몭ers a range of user-friendly features. Audio generation: OpenAI’s Whisper, Google’s AudioLM, and Meta’s AudioGen are signi몭cant contributors to the domain of audio generation. Whisper is an automatic speech recognition system that supports a multitude of languages and tasks. Google’s AudioLM and Meta’s AudioGen, on the other hand, utilize language modeling to generate high-quality audio, with the latter being able to convert text prompts into sound 몭les. Search engines: Neeva and You.com are AI-powered search engines prioritize user privacy while delivering curated, synthesized search results. Neeva leverages AI to provide concise answers and enables users to search across their personal email accounts, calendars, and cloud storage platforms. You.com categorizes search results based on user preferences and allows users to create content directly from the search results. Code generation: GitHub Copilot is transforming the world of software development by integrating AI capabilities into coding. Powered by a massive repository of source code and natural language data, GitHub Copilot provides personalized coding suggestions, tailored to the developer’s unique style. Furthermore, it o몭ers context-sensitive solutions, catering to the speci몭c needs of the coding environment. Impressively, GitHub Copilot can generate functional code across a variety of programming languages, e몭ectively becoming an invaluable asset to any developer’s toolkit. Text generation: Jasper.AI is a subscription-based text generation model that requires minimal user input. 
It can generate various types of text, from product descriptions to email subject lines. However, it has limitations, such as a lack of fact-checking and source citation. The rapid rise of consumer-facing generative AI is a testament to its transformative potential across industries. As these technologies continue to evolve, they will play an increasingly crucial role in shaping our digital future.

How is generative AI driving value across major industries?

| Industry | Total, $ billion | Total, % of industry revenue |
|---|---|---|
| Administrative & Professional Services | 150–250 | 0.9–1.4 |
| Advanced Electronics & Semiconductors | 100–170 | 1.3–2.3 |
| Advanced Manufacturing | 170–290 | 1.4–2.4 |
| Agriculture | 40–70 | 0.6–1.0 |
| Banking | 200–340 | 2.8–4.7 |
| Basic Materials | 120–200 | 0.7–1.2 |
| Chemical | 80–140 | 0.8–1.3 |
| Construction | 90–150 | 0.7–1.2 |
| Consumer Packaged Goods | 160–270 | 1.4–2.3 |
| Education | 120–230 | 2.2–4.0 |
| Energy | 150–240 | 1.0–1.6 |
| Healthcare | 150–250 | 1.8–3.2 |
| High Tech | 240–460 | 4.8–9.3 |
| Insurance | 50–70 | 1.8–2.8 |
| Media and Entertainment | 60–110 | 1.5–2.6 |
| Pharmaceuticals & Medical Products | 60–110 | 2.6–4.5 |
| Public and Social Sector | 70–110 | 0.5–0.9 |
| Real Estate | 110–180 | 1.0–1.7 |
| Retail | 240–390 | 1.2–1.9 |
| Telecommunications | 60–100 | 2.3–3.7 |
| Travel, Transport, & Logistics | 180–300 | 1.2–2.0 |
| Total | 2,600–4,400 | |

| Business function | Total, $ billion |
|---|---|
| Marketing & Sales | 760–1,200 |
| Customer Operations | 340–470 |
| Product & R&D | 230–420 |
| Software Engineering | 580–1,200 |
| Supply Chain & Operations | 280–530 |
| Risk & Legal | 180–260 |
| Strategy & Finance | 120–260 |
| Corporate IT | 40–50 |
| Talent & Organization | 60–90 |

Image reference: McKinsey

Let us explore the potential operational advantages of generative AI when it functions as a virtual specialist across various applications.

Customer operations

Generative AI has the potential to substantially transform customer operations, improving the customer experience and increasing agent proficiency through digital self-service and skill augmentation. The technology has already gained a firm footing in customer service because it can automate customer interactions through natural language processing. Here are a few examples of the operational improvements generative AI can bring to specific use cases:

Customer self-service: Generative AI-driven chatbots can deliver immediate, personalized responses to complex customer queries, regardless of the customer’s language or location. By improving the quality and efficiency of interactions through automated channels, generative AI could let customer service teams reserve their attention for queries that require human intervention. McKinsey’s research found that approximately half of customer contacts in sectors such as banking, telecommunications, and utilities in North America are already handled by machines, including AI. McKinsey projects that generative AI could further reduce the number of human-handled contacts by up to 50 percent, depending on a company’s current level of automation.

Resolution during the first contact: Generative AI can instantly retrieve data specific to a customer, enabling a human customer service representative to answer questions and resolve issues more effectively during the first interaction.

Reduced response time: Generative AI can cut the time a human sales representative takes to respond to a customer by offering real-time assistance and suggesting next steps.

Increased sales: Because it can quickly analyze customer data and browsing history, the technology can identify product suggestions and offers tailored to customer preferences.

Generative AI can also improve quality assurance and coaching by drawing insights from customer interactions, identifying areas for improvement, and providing guidance to agents.

McKinsey estimates that applying generative AI to customer care functions could yield significant productivity improvements, translating into cost savings of 30 to 45 percent of current function costs. However, this analysis considers only the direct impact of generative AI on the productivity of customer operations. It does not account for potential secondary effects on customer satisfaction and retention from an improved experience, such as a deeper understanding of the customer’s context that helps human agents provide more personalized assistance and recommendations.

Marketing and sales

Generative AI has swiftly permeated marketing and sales, where text-based communication and personalization at scale are primary drivers. The technology can generate personalized messages tailored to each customer’s specific interests, preferences, and behaviors. It can even create first drafts of brand advertising, headlines, slogans, social media posts, and product descriptions.

However, introducing generative AI into marketing operations demands careful planning. For instance, using AI models trained on publicly available data without sufficient safeguards against plagiarism, copyright violations, and brand-recognition issues risks infringing intellectual property rights. Moreover, a virtual try-on application might produce biased representations of certain demographics because of limited or skewed training data. Substantial human supervision therefore remains necessary, particularly for the original conceptual and strategic thinking specific to each company’s needs.

Potential operational advantages that generative AI can provide for marketing include the following:

Efficient and effective content creation: Generative AI can significantly speed up ideation and content drafting, saving time and effort. It can also help maintain a consistent brand voice, writing style, and format across content pieces. The technology can combine ideas from team members into a unified piece and personalize marketing messages for diverse customer segments, geographies, and demographics. For example, mass email campaigns can be translated into multiple languages, with imagery and messaging tailored to each audience. This capability could enhance customer value, attraction, conversion, and retention at a scale beyond what traditional techniques allow.

Enhanced data utilization: Generative AI can help marketing functions overcome the challenges of unstructured, inconsistent, and disconnected data. It can interpret abstract data sources such as text, images, and varying structures, helping marketers make better use of data like territory performance, synthesized customer feedback, and customer behavior to formulate data-informed marketing strategies.

Search engine optimization (SEO): Generative AI can help marketers achieve higher conversion and lower costs by optimizing technical components such as page titles, image tags, and URLs. It can synthesize key SEO elements, help create SEO-optimized digital content, and distribute targeted content to customers.

Product discovery and search personalization: Generative AI can personalize product discovery and search based on multimodal inputs (text, images, and speech) and a deep understanding of customer profiles. The technology can use individual preferences, behavior, and purchase history to surface the most relevant products and generate personalized product descriptions.

McKinsey estimates that generative AI could increase the productivity of the marketing function by a value of 5 to 15 percent of total marketing spending.

Generative AI could also significantly change how both B2B and B2C companies approach sales. Here are two potential use cases:

Increase sales probability: Generative AI could identify and prioritize sales leads by building comprehensive consumer profiles from structured and unstructured data, and suggest actions that help staff improve client engagement at every point of contact.

Improve lead development: Generative AI could help sales representatives nurture leads by synthesizing relevant product sales information and customer profiles. It could create discussion scripts to facilitate customer conversations, automate sales follow-ups, and passively nurture leads until clients are ready for direct interaction with a human sales agent.

McKinsey’s analysis suggests that generative AI could increase sales productivity by approximately 3 to 5 percent of current global sales expenditures. The technology can also drive value by working alongside employees, augmenting their work, and accelerating productivity. By rapidly processing large amounts of data and drawing conclusions, generative AI can provide insights and options that significantly enhance knowledge work, speed up product development, and let employees devote more time to higher-impact tasks.

Software engineering

Treating computer languages as just another kind of language opens up novel opportunities in software engineering. Software engineers can use generative AI for pair programming and augmented coding, and they can train large language models to build applications that generate code in response to a natural-language prompt describing the desired functionality.

Software engineering plays a crucial role in most companies, and that role keeps expanding as all large enterprises, not just technology giants, embed software in a broad range of products and services. For instance, a significant portion of the value of new vehicles derives from digital features such as adaptive cruise control, parking assistance, and Internet of Things (IoT) connectivity.

The direct impact of AI on software engineering productivity could range from 20 to 45 percent of current annual spending on the function. This value would come primarily from reducing the time spent on activities such as generating initial code drafts, correcting and refactoring code, performing root-cause analysis, and creating new system designs. By accelerating coding, generative AI could shift the skills needed in software engineering toward code and architecture design. One study found that software developers using Microsoft’s GitHub Copilot completed tasks 56 percent faster than those who did not use the tool. Moreover, an internal empirical study by McKinsey found that software engineering teams trained to use generative AI tools rapidly reduced the time required to generate and refactor code. Engineers also reported a better work experience, citing improvements in happiness, workflow, and job satisfaction.

Large technology companies are already marketing generative AI tools for software engineering, including GitHub Copilot, now integrated with OpenAI’s GPT-4, and Replit, which is used by over 20 million coders.

Research and development

The potential of generative AI in Research and Development (R&D) may be less widely recognized than in other business functions, yet studies suggest the technology could deliver productivity benefits equivalent to 10 to 15 percent of total R&D expenses. For instance, industries such as life sciences and chemicals have begun using generative AI foundation models in their R&D processes for generative design. These models can generate candidate molecules, accelerating the development of new drugs and materials. Entos, a biotech pharmaceutical company, has paired generative AI with automated synthetic development tools to design small-molecule therapeutics. The same principles can be applied to the design of many other products, including large-scale physical items and electrical circuits.

While other generative design techniques, such as those based on “traditional” machine learning, have already unlocked some of AI’s potential in R&D, their costs and data requirements can restrict their use. Pretrained foundation models that underpin generative AI, or models adapted through fine-tuning, apply more broadly than models optimized for a single task. Consequently, they can shorten time-to-market and expand the types of products to which generative design can be applied. However, foundation models cannot yet assist with product design across all industries.

Beyond the productivity gains from quickly generating candidate designs, generative design can also improve the designs themselves. Here are some examples of the operational improvements generative AI could bring:

Enhanced design: Generative AI can help product designers reduce costs by selecting and using materials more efficiently. It can also optimize designs for manufacturing, reducing logistics and production costs.

Improved product testing and quality: Using generative AI in generative design can result in higher-quality products that are more attractive to the market. Generative AI can reduce testing time for complex systems and speed up trial phases involving customer testing through its ability to draft scenarios and profile testing candidates.

McKinsey’s analysis also identified a new R&D use case for non-generative AI: deep learning surrogates, which can be combined with generative AI to produce even greater benefits. Integrating these technologies will require developing specific solutions, but the value could be considerable because deep learning surrogates can accelerate the testing of designs proposed by generative AI.

Retail and CPG

Generative AI holds significant potential to drive value in the retail and Consumer Packaged Goods (CPG) sector. The technology could increase productivity by an amount equivalent to 1.2 to 2.0 percent of annual revenues, or an additional $400 billion to $660 billion. These gains could come from automating key functions such as customer service, marketing and sales, and inventory and supply chain management. The retail and CPG industries have relied on technology for several decades.
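As a quick back-of-the-envelope check on the retail and CPG estimate (1.2 to 2.0 percent of annual revenues, or $400 billion to $660 billion), dividing each dollar figure by its percentage shows that both ends of the range imply roughly the same annual revenue base. This derived base is simple arithmetic on the quoted numbers, not a figure stated in the source estimate:

$$
\frac{\$400\ \text{billion}}{0.012} \approx \$33.3\ \text{trillion},
\qquad
\frac{\$660\ \text{billion}}{0.020} = \$33.0\ \text{trillion}
$$

Because the two implied bases agree, the percentage range and the dollar range express the same estimate in two different units rather than two independent projections.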

Traditional AI and advanced analytics have helped companies manage vast amounts of data across numerous SKUs, complex supply chains, warehousing networks, and multifaceted product categories. In these highly customer-facing industries, generative AI can complement existing AI capabilities. For example, generative AI can personalize offerings to optimize marketing and sales activities already handled by existing AI solutions. It also excels at data management, potentially supporting existing AI-driven pricing tools.

Some retail and CPG companies have already begun using generative AI. For instance, the technology can improve customer interactions by personalizing experiences based on individual preferences. Companies like Stitch Fix are experimenting with AI tools like DALL·E to suggest styles based on customers’ color, fabric, and style preferences. Retailers can use generative AI to provide next-generation shopping experiences, gaining a significant competitive edge in an era when customers expect natural-language interfaces for selecting products.

In customer care, generative AI can be combined with existing AI tools to improve chatbot capabilities, enabling them to mimic human agents more closely. Automating repetitive tasks lets human agents focus on complex customer problems, resulting in improved customer satisfaction, increased traffic, and brand loyalty.

Generative AI also brings new capabilities to the creative process. It can help with copywriting for marketing and sales, brainstorm creative marketing ideas, speed up consumer research, and accelerate content analysis and creation.

However, integrating generative AI into retail and CPG operations involves certain considerations. The emergence of generative AI has increased the need to determine whether generated content is fact-based or inferred, demanding a new level of quality control. Foundation models are also a prime target for adversarial attacks, increasing potential security vulnerabilities and privacy risks.

To address these concerns, companies will need to strategically keep humans in the loop and prioritize security and privacy in any implementation. They will need to institute new quality checks for processes previously handled by humans, such as emails written by customer representatives, and conduct more detailed quality checks on AI-assisted processes, such as product design. As the economic potential of generative AI unfolds, retail and CPG companies will need to harness its capabilities strategically while managing the inherent risks.

Banking

Generative AI is poised to create significant value in the banking industry, potentially increasing productivity by the equivalent of 2.8 to 4.7 percent of the industry’s annual revenues, or an additional $200 billion to $340 billion. It could also enhance customer satisfaction, improve decision-making, uplift the employee experience, and mitigate risks through better fraud and risk monitoring.

Banking has already benefited substantially from existing AI applications in marketing and customer operations. Because regulations and programming languages in the sector are text-heavy, generative AI can deliver additional benefits. This potential is further amplified by characteristics of the industry such as sustained digitization efforts, large customer-facing workforces, stringent regulatory requirements, and its nature as a white-collar industry.

Banks have already begun using generative AI in their front lines and software activities. For instance, generative AI bots trained on proprietary knowledge can provide constant, in-depth technical support, helping frontline workers access data to improve customer interactions. Morgan Stanley is building an AI assistant with the same technology to help wealth managers quickly access and synthesize answers from a massive internal knowledge base.

Generative AI can also significantly reduce back-office costs. Customer-facing chatbots could assess user requests and route each one to the best service expert based on topic, level of difficulty, and customer type. Service professionals could use generative AI assistants to access all relevant information and address customer requests rapidly.

Generative AI tools also benefit software development. They can draft code based on context, accelerate testing, optimize the integration and migration of legacy frameworks, and review code for defects and inefficiencies, resulting in more robust and effective code.

Furthermore, generative AI can significantly streamline content generation by drawing on existing documents and data sets. It can create personalized marketing and sales content tailored to specific client profiles and histories. It could also automatically produce model documentation, identify missing documentation, and scan relevant regulatory updates, creating alerts for relevant shifts.

Pharmaceutical and medical

Generative AI could significantly influence the pharmaceutical and medical-product industries, with an anticipated annual impact of $60 billion to $110 billion. This potential stems from the laborious, resource-intensive process of new drug discovery: pharmaceutical companies spend approximately 20 percent of revenues on R&D, and developing a new drug takes around 10 to 15 years on average. Improving the speed and quality of R&D can therefore yield substantial value.

For instance, the lead identification stage of drug discovery involves identifying the molecule best suited to address the target of a potential new drug, which can take several months with traditional deep learning techniques. Generative AI and foundation models can speed up this process, completing it in just a few weeks.

Two key use cases for generative AI in the industry are improving the automation of preliminary screening and enhancing indication finding.

During the lead identification stage, scientists can use foundation models to automate the preliminary screening of chemicals, looking for chemicals that will have specific effects on drug targets. Foundation models allow researchers to cluster similar experimental images with higher precision than traditional models, making it easier to select the most promising chemicals for further analysis.

In the indication-finding phase of drug discovery, identifying and prioritizing new indications for a specific medication or treatment is critical. Foundation models allow researchers to map and quantify clinical events and medical histories, establish relationships, and measure the similarity between patient cohorts and evidence-backed indications. The result is a prioritized list of indications with a higher probability of success in clinical trials, because they are accurately matched with suitable patient groups. Pharmaceutical companies that have used this approach report high success rates in clinical trials for the top five indications recommended by a foundation model for a tested drug. Consequently, these drugs progress smoothly into Phase 3 trials, significantly accelerating drug development.

The ethical and social considerations and challenges of generative AI

Generative AI brings several ethical and social considerations and challenges, including:

Fairness: Generative AI models might unintentionally produce biased results because of imperfect training data or decisions made during their development.

Intellectual Property (IP): Training data and model outputs can pose significant IP challenges, possibly infringing on copyrighted, trademarked, or patented materials. Users of generative AI tools must understand what data was used in training and how it is reflected in the outputs.

Privacy: Privacy risks may arise if user-supplied information is identifiable in model outputs. Generative AI might also be exploited to create and spread malicious content, including disinformation, deepfakes, and hate speech.

Security: Cyber attackers could use generative AI to increase the speed and sophistication of their attacks. Generative AI is also susceptible to manipulation that results in harmful outputs.

Explainability: Generative AI uses neural networks with billions of parameters, which makes it difficult to explain how a particular output is produced.

Reliability: Generative AI models can give different answers to the same prompt, which can make it harder for users to assess the accuracy and reliability of the outputs.

Organizational impact: Generative AI may significantly affect workforces, potentially with a disproportionately negative impact on specific groups and local communities.

Social and environmental impact: Developing and training generative AI models could lead to adverse social and environmental outcomes, including increased carbon emissions.

Hallucination: Generative AI models like ChatGPT can struggle when they lack sufficient information to provide meaningful responses, leading them to produce plausible yet fictitious content, including fabricated sources.

Bias: Generative AI might exhibit cultural, confirmation, and authority biases, which users need to keep in mind when judging the reliability of its output.

Incomplete data: Even the latest models, such as GPT-4, lack recent content in their training data, limiting their ability to generate content about recent events.
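The reliability point above has a simple mechanical explanation. Language models generate text one token at a time, and many deployments sample each next token from a probability distribution, often reshaped by a temperature parameter. The sketch below is a minimal illustration under stated assumptions: the four candidate tokens and their scores are made up, and real systems usually layer further decoding strategies (such as top-k or nucleus sampling) on top of this. It shows why the same prompt can produce different outputs under sampling, while greedy decoding returns the same answer every time.

```python
import math
import random


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw next-token scores into probabilities.

    Lower temperatures sharpen the distribution toward the top-scoring token;
    higher temperatures flatten it, so less likely tokens appear more often.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive (use greedy decoding instead)")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(tokens: list[str], logits: list[float],
                      temperature: float, rng: random.Random) -> str:
    """Draw one token at random, weighted by its temperature-scaled probability."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


def greedy_next_token(tokens: list[str], logits: list[float]) -> str:
    """Always pick the highest-scoring token, so the output is deterministic."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return tokens[best]


# Hypothetical scores a model might assign to the next word after one fixed
# prompt, e.g. "The capital of France is".
tokens = ["Paris", "Lyon", "Marseille", "Nice"]
logits = [4.0, 2.5, 2.0, 1.0]

rng = random.Random(0)  # seeded only so the demo is repeatable
sampled = [sample_next_token(tokens, logits, 1.0, rng) for _ in range(20)]
print(sampled)                            # repeated runs of the same prompt can differ
print(greedy_next_token(tokens, logits))  # "Paris" every time
```

Lowering the temperature or switching to greedy decoding makes outputs more repeatable, but not more accurate: if a model’s highest-scoring token is wrong, it will then be wrong consistently.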

Generative AI’s ethical, democratic, environmental, and social risks should be considered thoroughly. Ethically, it can generate a large volume of unverifiable information. Democratically, it can be exploited for mass disinformation or cyberattacks. Environmentally, its high computational demands can increase carbon emissions. Socially, it might render many professional roles obsolete. These multifaceted challenges underscore the importance of managing generative AI responsibly.

Current trends of generative AI

[Figure: Expert estimates of when technical capabilities could reach human-level performance, on a timeline from 2010 to 2080, comparing estimates made before generative AI (2017) with estimates after recent AI developments (2023); median and top quartile shown, with lines representing the range of expert estimates. Capabilities: coordination with multiple agents, creativity, logical reasoning and problem solving, natural-language generation, natural-language understanding, output articulation and presentation, generating novel patterns and categories, sensory perception, social and emotional output, social and emotional reasoning, and social and emotional sensing. Image reference: McKinsey]

Prompt-based creation: Generative AI’s impressive applications in art, music, and natural language processing are driving growing demand for prompt engineering skills. Companies can transform content production by improving the user experience with prompt-based creation tools. However, IT decision-makers must ensure data and information security when using these tools.

API integration into enterprise applications: While the spotlight is currently on chat functionality, APIs will increasingly simplify integrating generative AI capabilities into enterprise applications. These APIs will let all kinds of applications, from mobile apps to enterprise software, use generative AI to add value. Tech giants such as Microsoft and Salesforce are already exploring innovative ways to integrate AI into their productivity and CRM apps.

Business process transformation: As generative AI continues to advance, it will likely automate or augment everyday tasks, enabling businesses to rethink their processes and extend the capabilities of their workforce. This evolution can give rise to novel business models and experiences that let small businesses appear bigger and large corporations operate more nimbly.

Advancement in healthcare: Generative AI can potentially improve patient outcomes and streamline tasks for healthcare professionals. It can digitize medical documents for efficient data access, improve personalized medicine by organizing diverse medical and genetic information, and offer intelligent transcription that saves time and simplifies complex data. It can also boost patient engagement through personalized recommendations, medication reminders, and better symptom tracking.

Evolution of synthetic data: Improvements in generative AI technology can help businesses make use of imperfect data while addressing privacy issues and regulations. Using generative AI to create synthetic data can accelerate the development of new AI models, strengthen decision-making, and enhance organizational agility.

Optimized scenario planning: Generative AI can potentially improve simulations of large-scale macroeconomic or geopolitical events. With ongoing supply chain disruptions having long-lasting effects on organizations and the environment, better simulations of rare events could help mitigate their adverse impacts cost-effectively.

Reliability through hybrid models: The future of generative AI might lie in combining different models to counter the inaccuracies of large language models. Hybrid models that fuse the strengths of LLMs with the accurate narratives of symbolic AI can drive innovation, productivity, and efficiency, particularly in regulated industries.

Tailored generative applications: We can expect a surge in personalized generative applications that adapt to individual users’ preferences and behaviors. For instance, personalized learning or music applications can optimize content delivery based on a user’s history, mood, or learning style.

Domain-specific applications: Generative AI can provide tailored solutions for specific domains, such as healthcare or customer service. Industry-specific insights and automation can significantly improve workflows. For IT decision-makers, the focus will shift toward identifying high-quality training data and strengthening operational and reputational safety.

Intuitive natural language interfaces: Generative AI is poised to foster the development of Natural Language Interfaces (NLIs), making system interactions more user-friendly.
For instance, warehouse workers can interact with NLIs through headsets connected to an ERP system, reducing errors and boosting efficiency.

Endnote

Generative AI stands at the forefront of technology and could redefine numerous facets of our lives. However, as with any emerging technology, the path to maturity comes with hurdles. A key challenge lies in the vast datasets required to develop these models, along with the substantial computational power needed to process them. Additionally, the cost of training generative models, particularly large language models (LLMs), can be significant, posing a barrier to widespread accessibility.

Despite these challenges, the progress made in the field is undeniable. Studies indicate that while large language models have shown impressive results, smaller, targeted datasets still play a pivotal role in boosting LLM performance on domain-specific tasks. This approach could streamline the resource-intensive process of building these models, making them more cost-effective and manageable.

As the field progresses, it is imperative to remain mindful of the security and safety implications of generative AI. Leading organizations in the field are incorporating human feedback early in the model development process to promote safer outcomes. Moreover, the emergence of open-source alternatives is broadening access to next-generation LLMs. This democratization benefits practitioners and empowers independent scientists to push the boundaries of what is possible with generative AI.

In conclusion, the current state of generative AI is filled with exciting possibilities, albeit accompanied by challenges. The industry's concerted efforts to overcome these hurdles promise a future where generative AI becomes an integral part of our everyday lives.

Ready to transform your business with generative AI? Contact LeewayHertz today and unlock the full potential of robust generative AI solutions tailored to your specific needs!

Author's Bio

Akash Takyar, CEO, LeewayHertz

Akash Takyar is the founder and CEO of LeewayHertz. His experience building more than 100 platforms for startups and enterprises allows him to rapidly architect and design solutions that are scalable and beautiful. Akash's ability to build enterprise-grade technology solutions has attracted over 30 Fortune 500 companies, including Siemens, 3M, P&G, and Hershey's. Akash is an early adopter of new technology, a passionate technology enthusiast, and an investor in AI and IoT startups.

©2023 LeewayHertz. All Rights Reserved.